Daydreamer, Short-Form Regenerative AI Video Experiments

I set out to build a controllable AI filmmaking tool.

The goal was not to make trippy short clips for social media. The goal was to build something more like a real filmmaking system, something with repeatable shots, structured scene control, camera motion, and results you could direct instead of guess.

I started with a frame-by-frame Stable Diffusion approach driven by 3D scene data from game engines. That gave me a way to bring in structure, depth, motion, and camera framing.

Then I ran into the real problem:

temporal stability.

The system could produce great individual frames, but keeping those frames coherent over time was much harder. So I started adding more and more machinery to stabilize it.

I tried depth maps, edge maps, IP Adapter style guidance, segmentation masks, trust masks, motion warp blending, alpha blending, and a whole set of automation and scoring tools to keep the renders from collapsing into mush. I even started tracking mush scores as a way to measure when the sequence was losing coherence.

All of that work taught me something important.

This particular architecture can only be pushed so far.

If I really want the controllable AI filmmaker tool I have in mind, I need to move toward a more video-centric rendering model instead of forcing a stitched single-frame system to do a job it was not really built to do.

But while I was pushing on that problem, I found something interesting.

When I increased denoising and leaned into the feedback loop, the system started inventing new things and then remembering them. It would create shapes, costumes, textures, and architectural details that were never in the source scene, then carry them forward for a while and play with them. Watching that happen was fun in a way I did not expect.

That detour became Daydreamer.

What Daydreamer Is

Daydreamer is a short-form regenerative AI video experiment built out of that discovery.

It is not the final product. It is not the destination. It is a visible branch of the work, a way to explore what happens when structure, music, and feedback are allowed to interact in a more expressive way.

Instead of trying to lock everything down, Daydreamer lets the system drift, invent, and evolve, while still being guided by scene structure, prompts, masks, and timing.

That made it possible to turn the pipeline into short clips that people could actually watch and respond to.

And people did respond.

The Sand Temple clip got a better reaction online than I expected. That pushed me further into portrait mode, beat-driven edits, and shorter pieces designed for phones and social sharing.

Music Makes It Move

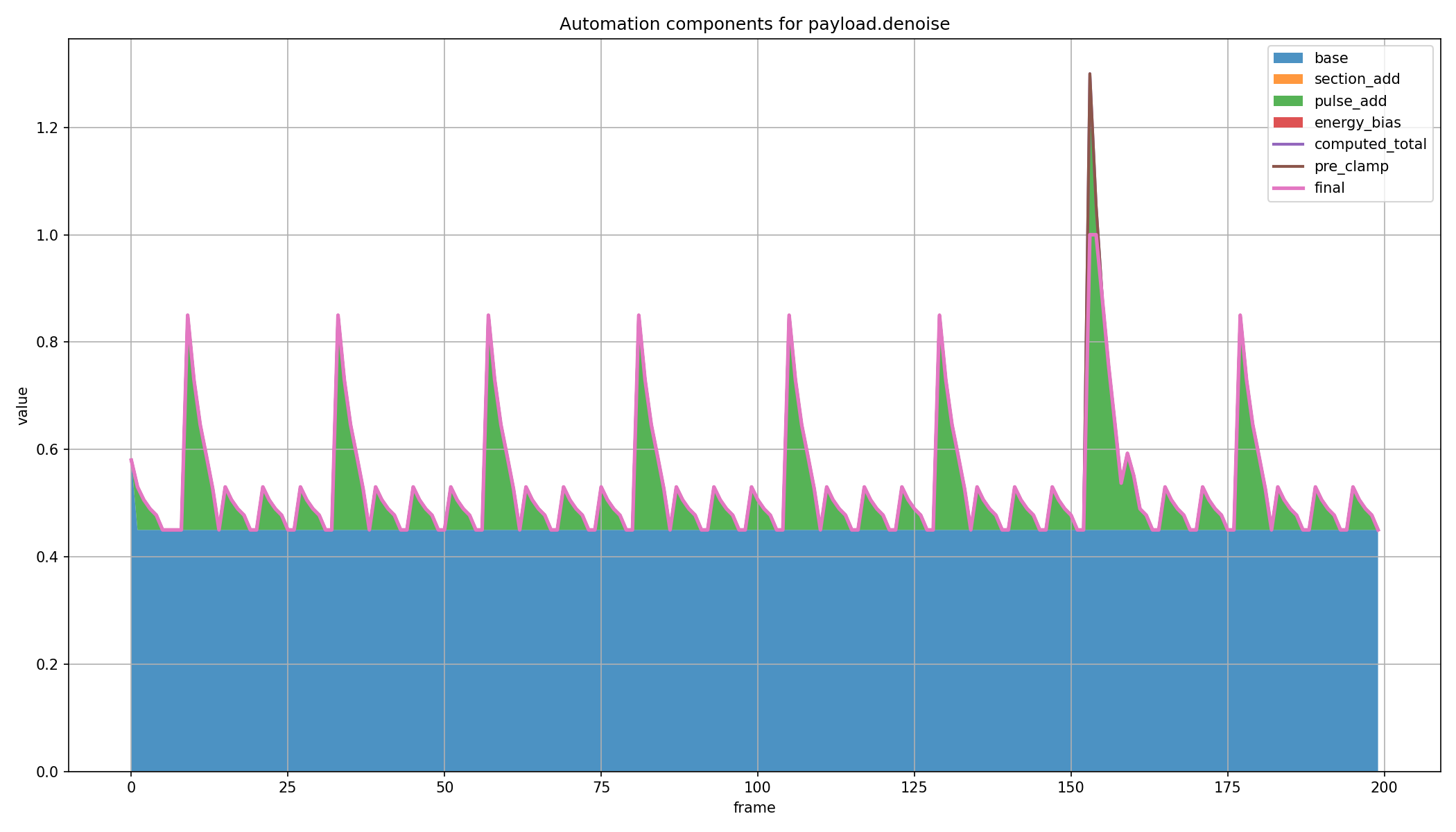

One of the strongest parts of this workflow came from connecting render settings to music.

I started dynamically changing denoising, CFG, and other weights on beats and downbeats. In effect, the music becomes part of the control system. On strong beats, the render gets pushed harder and invents more. On lighter beats, it settles back down.

That is what gives some of these clips their pulsing, exploratory quality. It is not just random hallucination. The timing is being driven.

The result is still not fully controllable in the way I ultimately want, but it is expressive, visually surprising, and much more alive than a static render pipeline.

Why I Am Continuing It

Daydreamer is useful for two reasons.

First, it is a real creative outlet. The clips are fun to make, fun to watch, and they reveal behaviors in the system that I would not notice from still frames or technical logs alone.

Second, it is a showcase of what I have built so far. It gives people something immediate to see while I continue working toward the larger goal.

That larger goal is still Shotmaker.

Shotmaker is the tool I actually want to build, a more controllable AI filmmaking system where scene structure, camera motion, and visual variation can be directed with much more reliability.

Daydreamer is the sidetrack. Shotmaker is still the destination.

What Comes Next

I want to keep pushing both sides of this work.

On one side, I want to keep producing short-form Daydreamer clips, because they are engaging, they get attention, and they show the system in motion.

On the other side, I want to keep moving toward a more serious filmmaking architecture, one that is less dependent on frame stitching and better suited for repeatable, directed results.

That next step will take more development time, more iteration, and more compute.

But the path is clearer now than it was before.

I know what I was trying to make.

I know where this architecture starts to break down.

And I know that even the detour taught me something worth keeping.

You can see the current experiments here: